Easily cache your applications in Kubernetes

There are only two hard things in Computer Science: cache invalidation and naming things.

- Phil Karlton

Problem

How many times have you wanted to cache static data that never (or almost never) changes? A classic example is the set of labels shown in a front-end select box.

Easy solution

So where is the problem? Cache it!

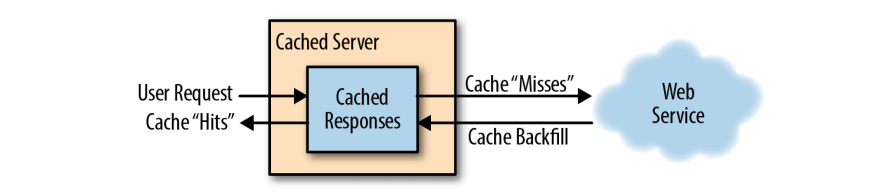

You probably already know how to manage a cached response in general (or at least I hope so), so let's look at how to do it properly in a distributed system like Kubernetes.
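On the backend side, the simplest way to make static data cacheable is to declare it in the response headers. By default, Varnish derives an object's TTL from headers such as `Cache-Control: max-age`. When those are absent, it falls back to its `default_ttl` parameter (120 seconds out of the box).

The sketch below is a hypothetical stand-in for a labels backend and uses only the Python standard library. The `/labels` path, the `LABELS` data and the one-hour `max-age` are assumptions for illustration. It listens on port 8080 to match the backend port used later in this post.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical static data: labels for a front-end select box.
LABELS = {"colors": ["red", "green", "blue"]}

# Assumption: one hour; tune it to how often your labels really change.
MAX_AGE_SECONDS = 3600


class LabelsHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path != "/labels":  # hypothetical endpoint path
            self.send_error(404)
            return
        body = json.dumps(LABELS).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        # Tells Varnish (and browsers) that the response may be cached for an hour.
        self.send_header("Cache-Control", f"public, max-age={MAX_AGE_SECONDS}")
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format: str, *args: object) -> None:
        pass  # silence the default per-request logging


def make_server(port: int = 8080) -> ThreadingHTTPServer:
    """Bind on all interfaces; 8080 matches the backend port in default.vcl."""
    return ThreadingHTTPServer(("0.0.0.0", port), LabelsHandler)
```

To run it locally, call `make_server().serve_forever()`. Picking `max-age` is the cache invalidation trade-off from the quote above. A longer TTL means fewer requests reach the backend, but also a longer wait before changed labels show up.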

For our purposes, we will use Varnish, an open source web cache.

I will present two solutions:

- First view

- Final view

First view

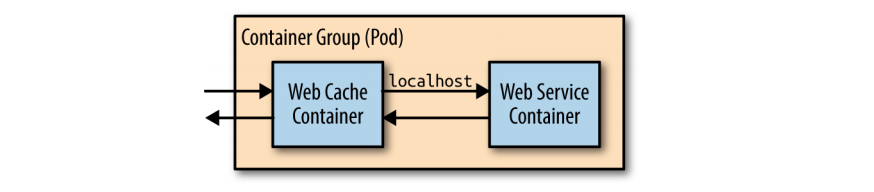

The simplest way to deploy the web cache is alongside each instance of your web server, using the sidecar pattern.

Though this approach is simple, it has a significant disadvantage: your cache has to scale in lockstep with your web servers, which is often not what you want. Every page ends up stored in every replica, so with 10 replicas every page is stored 10 times. This reduces the overall set of distinct pages the cache can keep in memory, which lowers the hit rate and raises the miss rate.

Final view

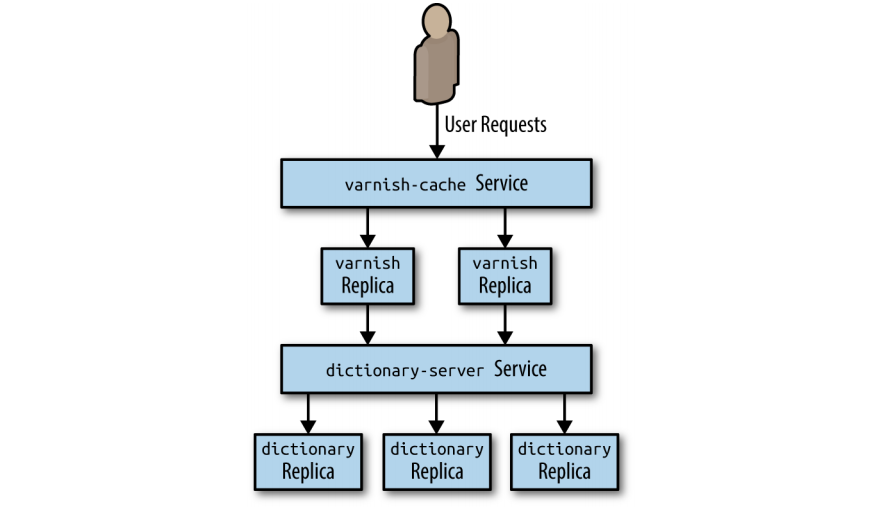

Therefore, it makes the most sense to configure your caching layer as a second stateless, replicated serving tier above your web-serving tier, as illustrated in the figure above.
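A small simulation shows why duplicating pages hurts. The sketch below models each Varnish instance as a fixed-size LRU cache and sends every request to a randomly chosen replica, roughly the way a Kubernetes Service spreads traffic. It compares 10 sidecar caches against a 2-replica cache tier with the same total memory.

The workload (Zipf-like popularity over 10,000 pages) and all sizes are assumptions chosen for illustration. Plain LRU is also a simplification of Varnish's real storage behaviour.

```python
from __future__ import annotations

import random
from collections import OrderedDict


class LRUCache:
    """Fixed-size LRU cache that tracks only page IDs; contents don't matter here."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.entries: OrderedDict[int, None] = OrderedDict()

    def get_or_fill(self, page: int) -> bool:
        """Return True on a hit; on a miss, store the page, evicting the LRU one if full."""
        if page in self.entries:
            self.entries.move_to_end(page)
            return True
        self.entries[page] = None
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)
        return False


def hit_rate(replicas: int, capacity_per_replica: int, requests: list[int], seed: int = 0) -> float:
    """Send each request to a random replica and return the overall hit ratio."""
    rng = random.Random(seed)
    caches = [LRUCache(capacity_per_replica) for _ in range(replicas)]
    hits = sum(caches[rng.randrange(replicas)].get_or_fill(page) for page in requests)
    return hits / len(requests)


def zipf_like_requests(pages: int, n: int, seed: int = 42) -> list[int]:
    """Skewed workload (assumption): page k is requested with weight 1 / (k + 1)."""
    rng = random.Random(seed)
    weights = [1 / (k + 1) for k in range(pages)]
    return rng.choices(range(pages), weights=weights, k=n)


requests = zipf_like_requests(pages=10_000, n=200_000)
# Same total cache memory (1,000 pages), split in two different ways.
sidecars = hit_rate(replicas=10, capacity_per_replica=100, requests=requests)
cache_tier = hit_rate(replicas=2, capacity_per_replica=500, requests=requests)
print(f"10 sidecar caches x 100 pages: {sidecars:.1%} hit rate")
print(f"2-replica cache tier x 500 pages: {cache_tier:.1%} hit rate")
```

Every replica sees the same mix of requests, so each one fills up with the same popular pages. The hit rate therefore follows the memory of a single replica rather than the total. With equal total memory, the 2-replica tier should report a clearly higher hit rate than the 10 sidecars.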

Hands on code

Create the Varnish configuration file, `default.vcl`, and store it in a ConfigMap.

```vcl
vcl 4.0;

backend default {
    .host = "dictionary-server-service";
    .port = "8080";
}
```

```shell
kubectl create configmap varnish-config --from-file=default.vcl
```

Now write the Varnish Deployment manifest (saved here as `varnish-deploy.yaml`) and create it.

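```yaml
# apps/v1 replaces extensions/v1beta1, which was removed in Kubernetes 1.16
apiVersion: apps/v1
kind: Deployment
metadata:
  name: varnish-cache
spec:
  replicas: 2
  # apps/v1 requires an explicit selector matching the pod template labels
  selector:
    matchLabels:
      app: varnish-cache
  template:
    metadata:
      labels:
        app: varnish-cache
    spec:
      containers:
        - name: cache
          resources:
            requests:
              # covers the 2G malloc storage; varnishd needs some memory on top of it
              memory: 2Gi
          image: brendanburns/varnish
          command:
            - varnishd
            - '-F'                              # run in the foreground
            - '-f'                              # VCL file to load
            - /etc/varnish-config/default.vcl
            - '-a'                              # listen address
            - '0.0.0.0:8080'
            - '-s'                              # storage: 2G in memory
            - 'malloc,2G'
          ports:
            - containerPort: 8080
          volumeMounts:
            - name: varnish
              mountPath: /etc/varnish-config
      volumes:
        - name: varnish
          configMap:
            name: varnish-config
```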

```shell
kubectl create -f varnish-deploy.yaml
```

Finally, create the corresponding Varnish Service (`varnish-service.yaml`).

```yaml
kind: Service
apiVersion: v1
metadata:
  name: varnish-service
spec:
  selector:
    app: varnish-cache
  ports:
    - protocol: TCP
      port: 80
      targetPort: 8080
```

```shell
kubectl create -f varnish-service.yaml
```
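Once the Service is up, you can check whether responses really come from the cache. Varnish adds an `X-Varnish` header to each response. It holds the current request's ID and, on a cache hit, also the ID of the request that originally populated the cache.

The sketch below relies only on that behaviour and on the standard `Age` header, assuming your VCL does not strip either of them. The function names are hypothetical.

```python
from __future__ import annotations

import urllib.request


def is_varnish_hit(x_varnish: str | None) -> bool:
    """X-Varnish holds the current request ID; on a hit Varnish also appends the
    ID of the request that filled the cache entry, so two IDs mean a hit."""
    if not x_varnish:
        return False
    return len(x_varnish.split()) == 2


def check_url(url: str) -> tuple[bool, str | None]:
    """GET the URL and return (served from cache?, value of the Age header)."""
    with urllib.request.urlopen(url) as response:
        return is_varnish_hit(response.headers.get("X-Varnish")), response.headers.get("Age")
```

For example, forward the Service locally with `kubectl port-forward service/varnish-service 8080:80`. Then call `check_url("http://localhost:8080/some-path")` twice, where `/some-path` is any hypothetical path your backend answers with a cacheable response. The first call should report a miss. The second should report a hit, with `Age` counting the seconds the object has spent in the cache.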

Credits

-> Designing Distributed Systems by Brendan Burns
